Praveen Palanisamy

Principal AI Engineering Lead,

Autonomous Systems,

Microsoft AI + Research

Praveen Palanisamy is a Lead Principal AI Engineer and Manager at Microsoft, where he builds AI/ML-powered platform products for Autonomous Systems. His current focus is on building enterprise-grade Generative AI platform capabilities, including a suite of custom applications that leverage the Microsoft Copilot stack, Azure OpenAI, and OSS LLMs. Recently, he has been building Copilot/Agent-based platform components, automating LLMOps pipelines, developing memory-augmented Agents, pretraining/finetuning custom LMMs/LLMs, and prototyping full-stack AI apps. He leads a cross-functional team that researches and engineers platform and system components that empower customers with AI capabilities. One example is Project AirSim in the Autonomous Systems, Business AI Incubation group: an end-to-end (Perception + Scene-Understanding + Prediction + Planning + Control) autonomy platform for autonomous aerial robots and systems, built using Simulation, Planet-scale Synthetics, and Deep Reinforcement Learning using Project Bonsai. Prior to that, he was an Autonomous Driving AI Researcher at General Motors R&D in Michigan, where he developed planning and decision-making algorithms and architectures using Deep Reinforcement Learning. He is the lead inventor of 70+ patents in the area of autonomous systems. He has authored two practical books, HOIAWOG and TensorFlow 2.x RL Cookbook, for ML engineers, researchers, students, and enthusiasts. He has also worked at a few early-stage startups as a tech lead. He obtained his graduate degree from the Robotics Institute, Carnegie Mellon University, where he worked on Autonomous Navigation, Perception, and Artificial Intelligence as a Research and Teaching Assistant.

50+ pending

50+ companies, including Google DeepMind, Tesla/Elon, Nvidia, and Apple, cite my name in their patents. See more impact highlights here

17+ Journal/Conference Papers

IROS, ITSC, IV, NeurIPS

20+ reviews as TPC/Reviewer

NeurIPS, ICML, TNNLS

ICRA, IROS, RA-L

7+ full-stack AI Agents & Apps

Team leader: 10+ direct reports, interns

Sr. AI Engineers, SWEs, UI/UX Devs, PhD interns

5+ direct Customer & Partner engagements

A modular, scalable, and open-source aerospace simulation platform. AeroSim integrates with industry-standard tools through standardized interfaces, enabling a range of use cases including aircraft engineering, aerospace software development, and AI/ML workflows for autonomy development. Features include Universal Scene Description (USD) for simulation assets, FMI support for custom dynamics models, a robust middleware layer (Kafka/DDS) for standardized communication, and compatibility with high-fidelity renderers (UE5, Omniverse RTX). The project is built with Rust + Python at the core, Unreal/Omniverse renderers, a React/TypeScript UI, USD simulation assets, and Simulink integration for co-simulation. Dual-licensed under Apache 2.0/MIT (code) and CC BY 4.0 (assets).

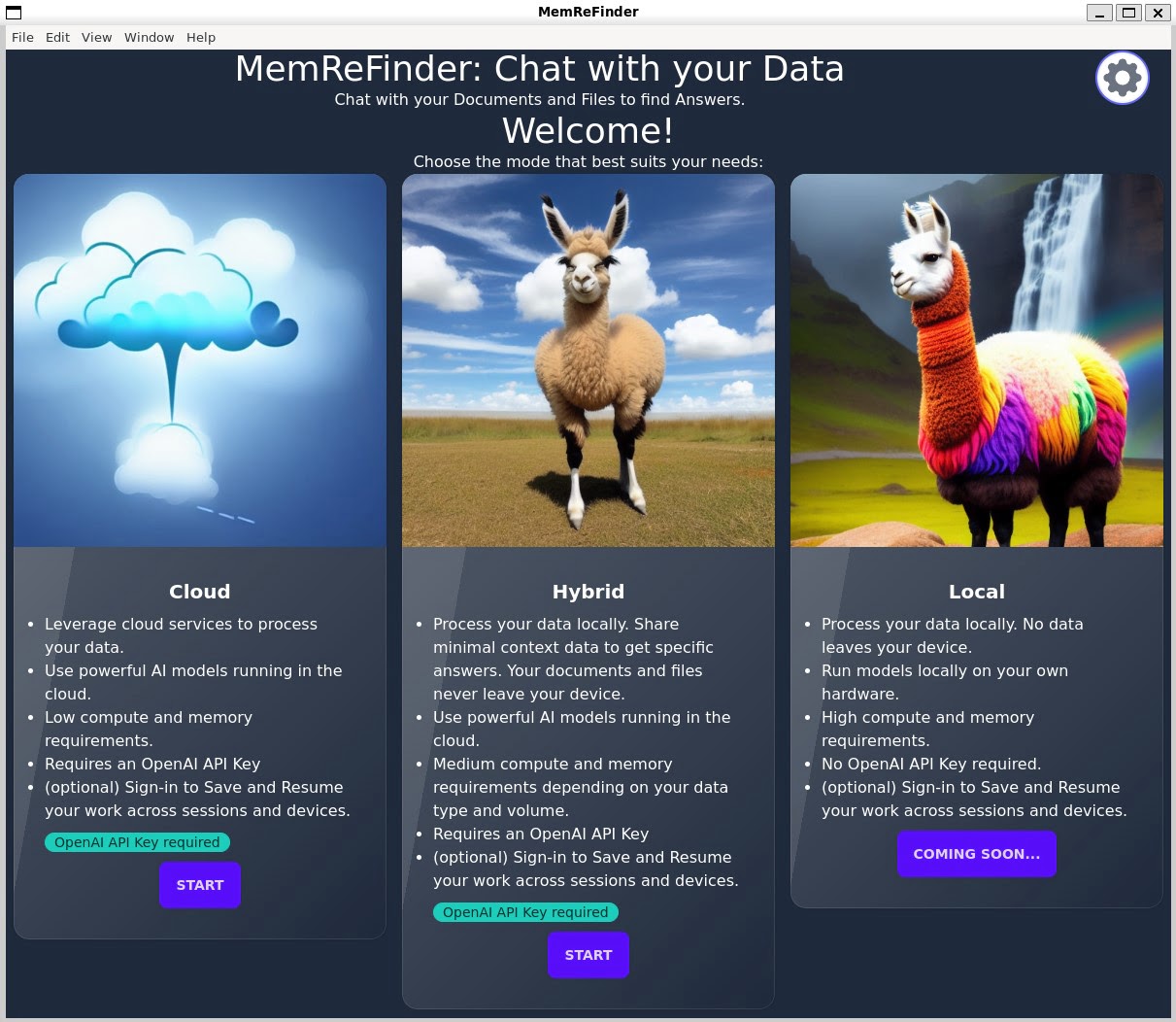

Cross-platform Memory and Retrieval-Augmented Finder (File Explorer) App that lets you chat with your data to find answers, powered by OpenAI-GPT/Llama2/Transformer.js models. You can load multiple DOCX, PDF, CSV, Markdown, HTML, or other files and ask questions about their content; the app uses embeddings and LLMs to generate answers from the most relevant files and the most relevant sections within them. It leverages a Vector DB for persistent Memory & Chats, and supports asynchronous LLM streaming Ops and Conversation Orchestration. See the project page for more details and for how to run or deploy it on your own machine, in the cloud, or in hybrid environments.
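
The retrieval step described above can be illustrated with a minimal sketch. This is not the app's actual code: the `embed`, `cosine`, `top_k`, and `build_prompt` helpers, the sample chunks, and the prompt wording are all hypothetical, and a toy bag-of-words vector stands in for a real embedding model and Vector DB.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words 'embedding' (hypothetical stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query, chunks, k=2):
    """Return the k chunks whose text is most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c["text"])), reverse=True)
    return ranked[:k]


def build_prompt(question, chunks, k=2):
    """Assemble an LLM prompt from the most relevant chunks (assumed prompt format)."""
    context = "\n".join(f"[{c['file']}] {c['text']}" for c in top_k(question, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical file sections that would normally come from parsed documents.
chunks = [
    {"file": "report.pdf", "text": "Quarterly revenue grew by twelve percent."},
    {"file": "notes.md", "text": "The drone battery lasts forty minutes per flight."},
    {"file": "data.csv", "text": "Sensor calibration values for the camera."},
]

best = top_k("How long does the drone battery last?", chunks, k=1)[0]
print(best["file"])
```

In a real deployment the ranked chunks would be fetched from a vector store and the prompt would be streamed to the chosen LLM; the ranking-then-prompting shape is the part this sketch is meant to show.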

This book contains easy-to-follow recipes for leveraging TensorFlow 2.x to develop artificial intelligence applications. Starting with an introduction to the fundamentals of deep reinforcement learning and TensorFlow 2.x, the book covers OpenAI Gym, model-based RL, model-free RL, and how to develop basic agents. You’ll discover how to implement advanced deep reinforcement learning algorithms such as actor-critic, deep deterministic policy gradients, deep-Q networks, proximal policy optimization, and deep recurrent Q-networks for training your RL agents. As you advance, you’ll explore the applications of reinforcement learning by building cryptocurrency trading agents, stock/share trading agents, and intelligent agents for automating task completion. Finally, you’ll find out how to deploy deep reinforcement learning agents to the cloud and build cross-platform apps using TensorFlow 2.x.

Premier-TACO is a novel multitask feature representation learning methodology for enhancing few-shot policy learning in sequential decision-making tasks. It pretrains general feature representations using a subset of relevant multitask offline datasets, which can then be fine-tuned for specific tasks with minimal expert demonstrations. Building upon temporal action contrastive learning with an efficient negative sampling strategy, Premier-TACO achieves remarkable performance improvements: 101% over learn-from-scratch baselines and 24% over previous state-of-the-art pretraining methods on DeepMind Control Suite, and similar advantages on MetaWorld benchmarks. Link to Project page, Code and Paper
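
For readers unfamiliar with contrastive objectives, here is a minimal sketch of a generic InfoNCE-style loss, the family of losses that contrastive representation learning typically builds on. It is an illustration only and not the Premier-TACO objective or its negative sampling strategy: the `info_nce` function, the dot-product similarity, and the `temperature` value are assumptions, and how negatives are chosen is left to the caller.

```python
import math


def dot(u, v):
    """Dot-product similarity between two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def info_nce(query, positive, negatives, temperature=0.1):
    """Generic InfoNCE loss: -log softmax score of the positive among all candidates.

    query, positive: embedding vectors (lists of floats).
    negatives: list of embedding vectors that should NOT match the query.
    """
    logits = [dot(query, positive) / temperature]
    logits += [dot(query, n) / temperature for n in negatives]
    # Numerically stable log-sum-exp over all candidates.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_sum_exp - logits[0]


# The loss is small when the query aligns with the positive ...
aligned = info_nce([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0]])
# ... and large when it aligns with a negative instead.
misaligned = info_nce([1.0, 0.0], [0.0, 1.0], [[1.0, 0.0]])
print(aligned < misaligned)
```

Minimizing such a loss pulls the query embedding toward its positive and pushes it away from the negatives, which is why the choice of negatives matters so much for the quality of the learned features.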

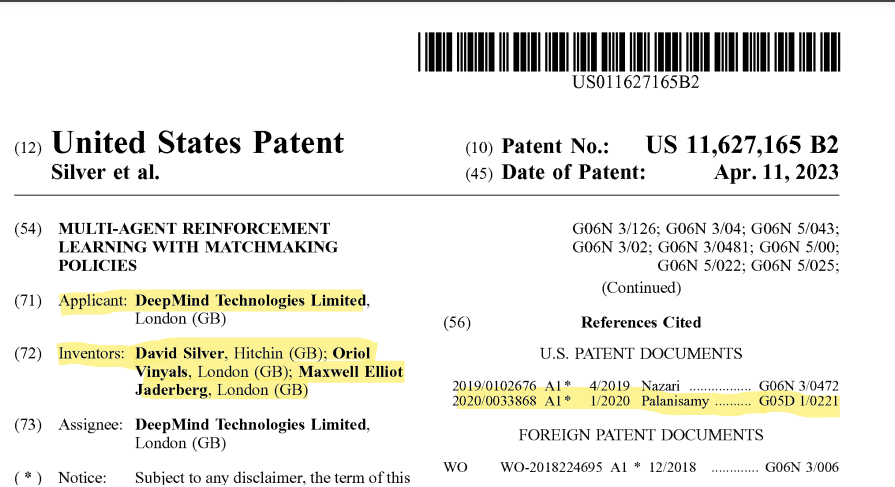

Google DeepMind’s patent “Multi-agent reinforcement learning with matchmaking policies”, granted on 2023-04-11, cites 3 patents in total (including the patent examiner’s citations), and one of them is my patent application US20200033868A1, which was granted on 2020-11-24. Seeing an invention by David Silver (led AlphaGo, AlphaZero, etc.), Oriol Vinyals (led AlphaStar, AlphaCode), and Max Jaderberg cite my work is motivating, as it shows that the work is indeed impactful.

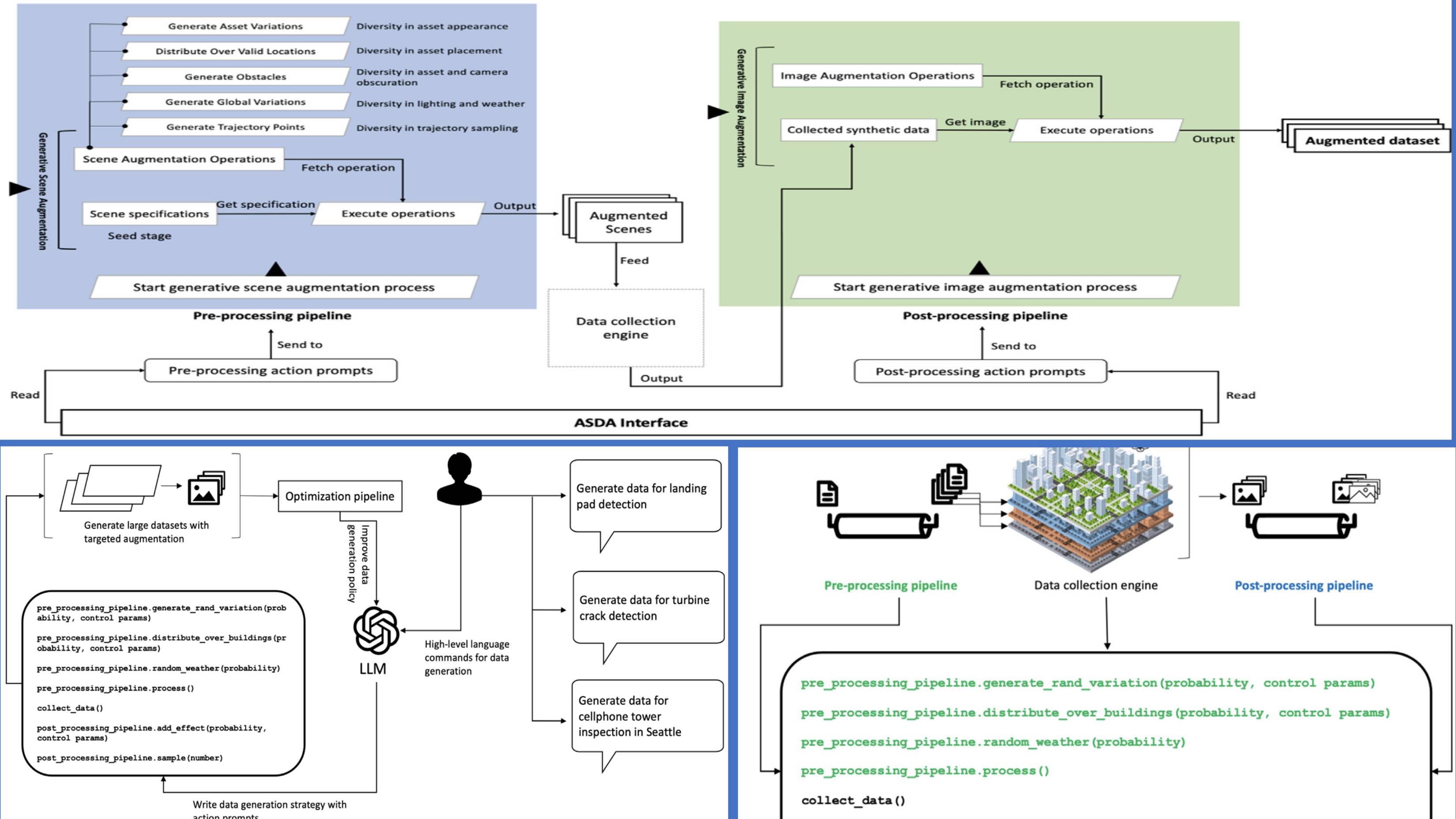

A paper on prompt-based, procedural generation of diverse datasets for training AI models for aerial autonomy applications. Published in the Robotics and Autonomous Systems journal, Volume 166. Link to summary video: https://www.youtube.com/watch?v=eKpOh-K-NfQ. Link to paper.

Contributed to the IEEE 3129 Standard for Robustness Testing and Evaluation of AI-based Service as a member of its working group, which is part of the AI Standards Committee of the IEEE Computer Society. The standard is now approved and published. It provides a framework for testing and evaluating the robustness of AI-based services.

Spoke at the Commercial Unmanned Aerial Vehicle (CUAV) news webinar on Transforming Infrastructure Inspection with Simulation and Autonomy. I was joined by John McKenna, Co-Founder & CEO of sees.ai, and Timothy Reuter from Microsoft. We discussed how running high-fidelity simulations at scale can accelerate mission planning, software and AI/ML model development, and iteration cycles. Leveraging AI and the Autonomy Building blocks, including pre-trained models that can be fine-tuned to build custom autonomy modules, is a key enabler for accelerating the journey towards aerial autonomy. I also covered some of the key features and focus areas of the Microsoft Project AirSim platform, which enables the entire end-to-end pipeline for aerial autonomy, and went over two specific application scenarios: 1. Cell Tower inspection and 2. Bridge inspection. Link to the Webinar page. Link to the recording of the webinar. A snapshot summary is available in the webinar handout slides. Post on LinkedIn.

I’m happy to announce that my book, HOIAWOG (“Hands-On Intelligent Agents with OpenAI Gym: Your guide to developing AI agents using deep reinforcement learning”), made it to the Best Reinforcement Learning eBooks of All Time list compiled by BookAuthority. BookAuthority collects and ranks the best books in the world, and it is a great honor to get this kind of recognition. Thank you to all the readers for your support! You can learn more about the HOIAWOG book here. The source code for all the agents, algorithms, and implementation details is available on GitHub. You can get a copy of the book from Amazon.

Building blocks for Autonomous Systems

Jun. 2019 - Present

Deep RL for Autonomous Driving

Jan 2016

Autonomous Navigation, Perception & Deep Learning

Aug 2014