Work-In-Progress draft.

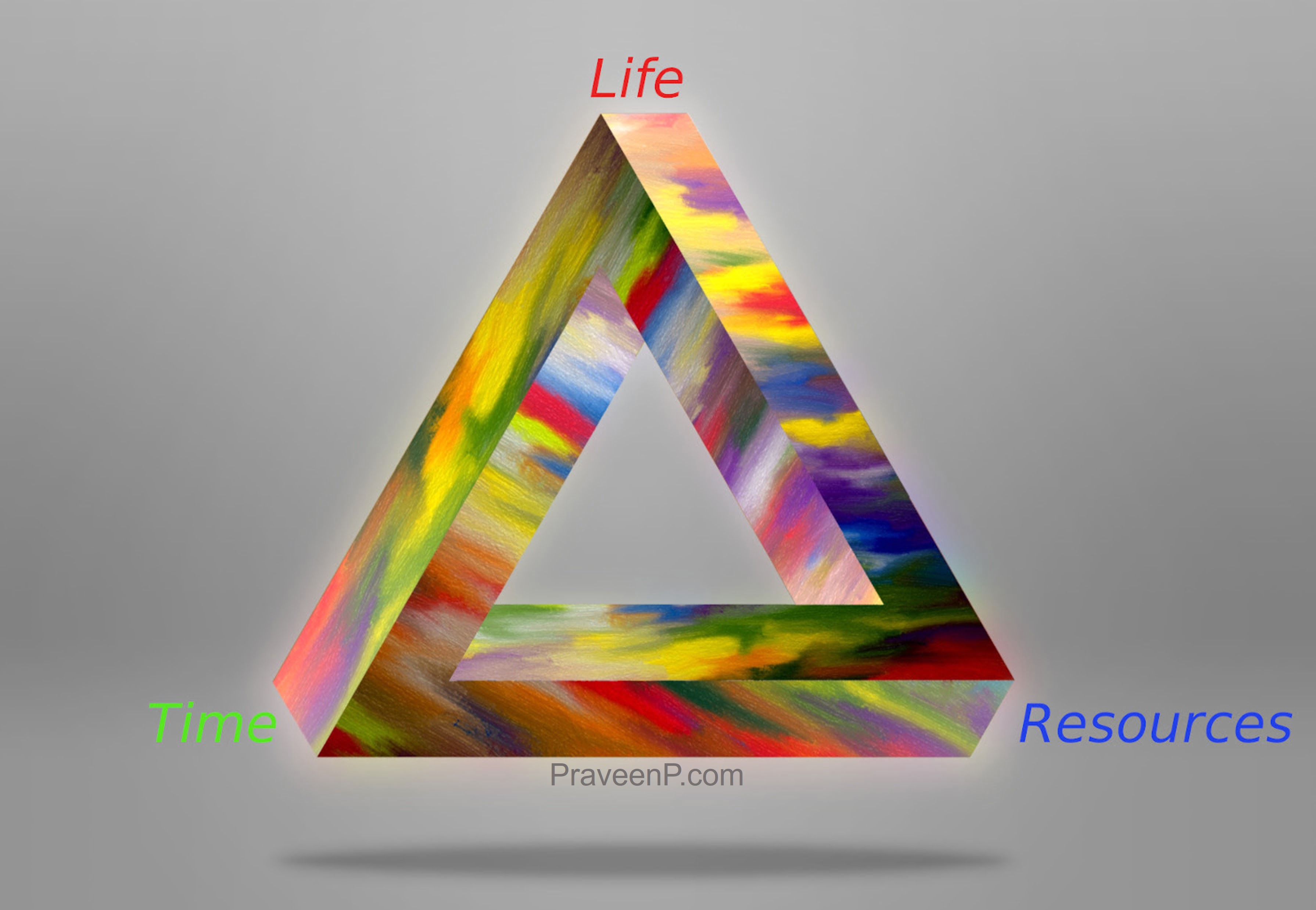

Maximizing Life-Time-Resources is an optimization that every human being runs in one way or another. Time can be traded for Resources and vice versa. We have only one (Physical) Life for now.

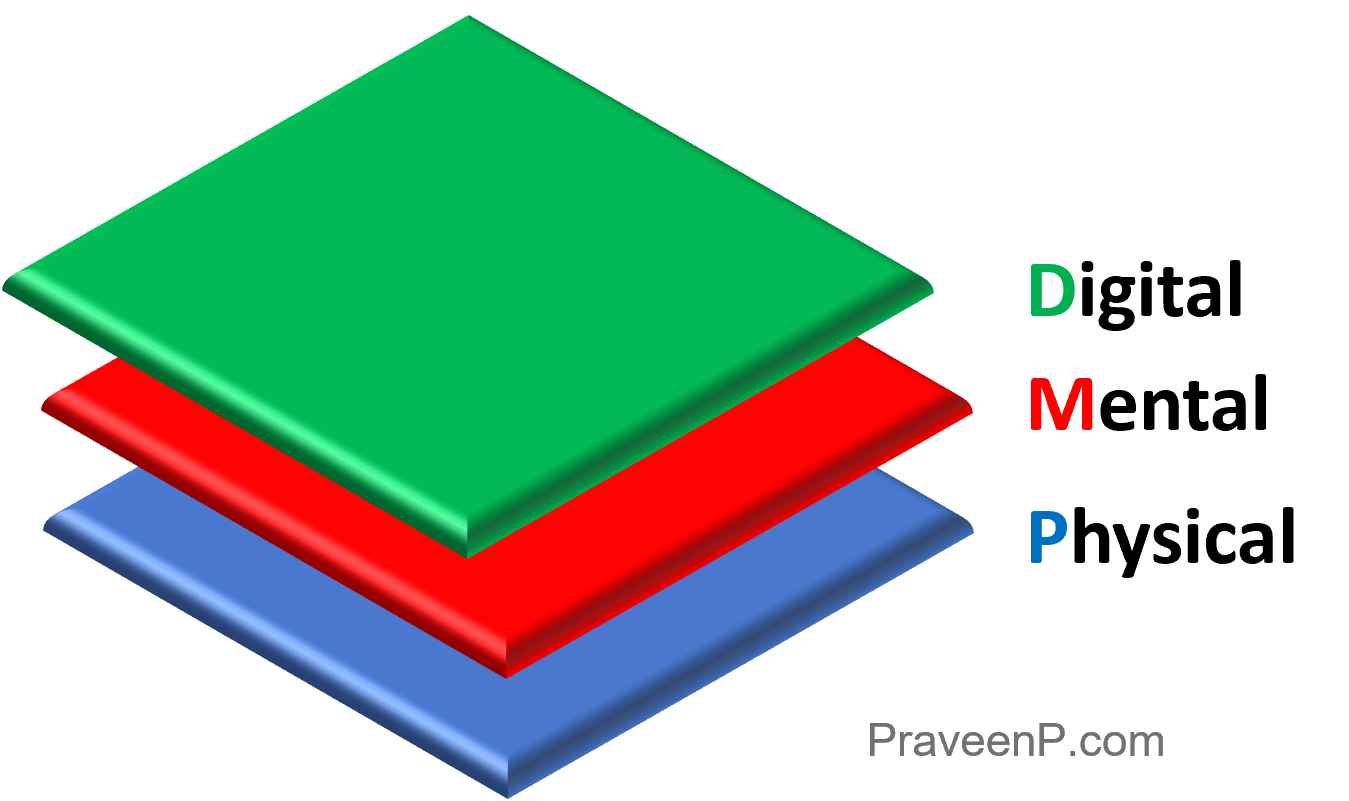

Our reality is made up of three layers: Mental, Digital and Physical.

Reality used to be the Physical world alone. It is increasingly becoming a combination of the Physical and the Digital worlds. The Mental world is what a human perceives and believes to be the Real world.

Our imagination, our Mental model, led us to create an extension of the Physical world. The Digital world offers another extension through Virtual/Augmented reality. Our Mental layer ultimately defines what we believe in and guides our actions. Our Mental layer is private by default.

As humans, we have one Physical Life, but we have the ability to create multiple Digital Agents that can continue to live long after our Life, Time and Resources run out.

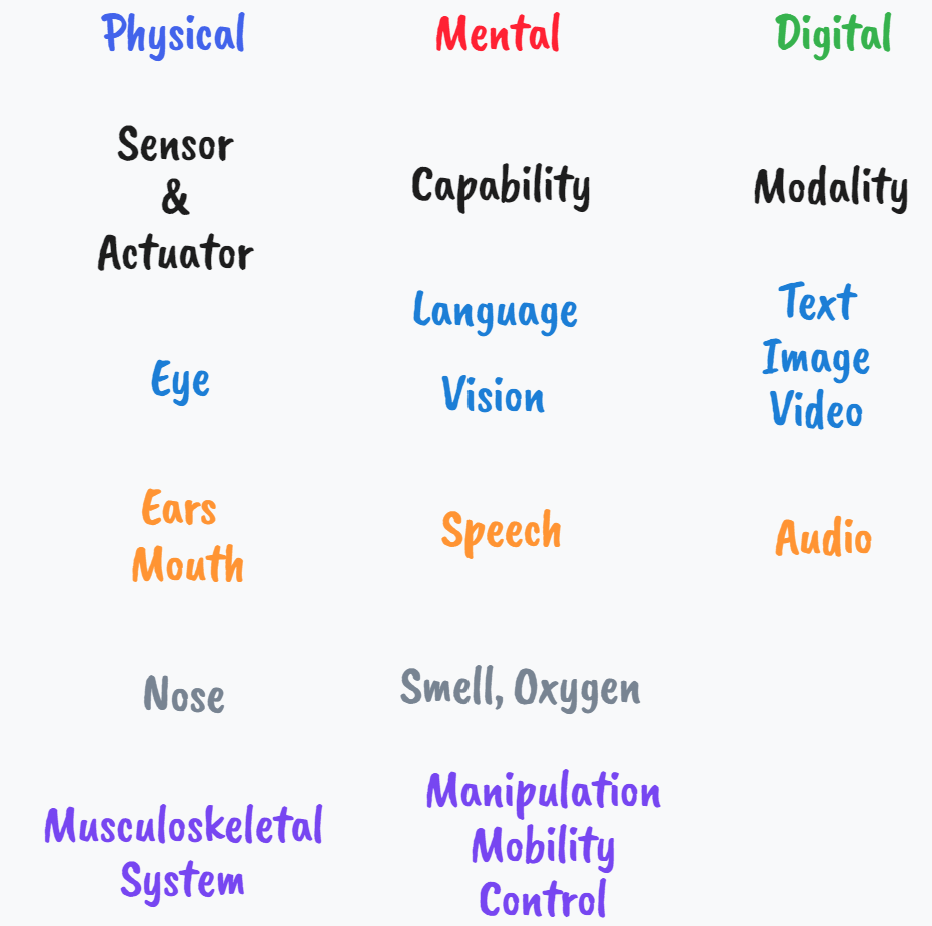

Computers have always been good at solving numerical tasks and enhancing human lives. Programming computers required humans to write in a particular programming language such as C++, Python, JavaScript or (web) assembly. With Artificial Neural Networks and Deep Learning, we built an abstraction layer on top of the computer hardware that lets us program computers using (passive) sets of data. We are getting closer to humans' natural ways of interacting, through images and natural language. Instead of making humans learn the machines' languages, we are able to make the machines learn human languages. When Deep Learning methods evolve to the point where a computer can be commanded to do any new task through natural, live (few-shot) interactions, we will be close to creating artificially intelligent creatures that take computer hardware as their Physical form (instead of human bodies). Some of those creatures could stay embodied in a robot, while others could live in a human accessory such as a phone or glasses, or even as an implant inside the human body. At that point, those creatures would be extended physical/digital Agents that enhance and preserve a human being's Life, Time and Resources. Every human could benefit from the opportunity to focus on self-actualization and self-realization, if not self-enlightenment.

In Reinforcement Learning (RL), an Agent maximizes the expected sum of discounted future rewards in a Markov Decision Process (MDP), which requires the Agent to interact with its environment in order to learn, act and adapt/improve. RL draws many analogies from human psychology. It is a simple but powerful formulation that is applicable to Human life at large.
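To make the "sum of discounted future rewards" concrete, here is a minimal Python sketch. It only computes the return of one already-observed sequence of rewards, r_0 + gamma * r_1 + gamma^2 * r_2 + ...; it does not learn anything. The function name `discounted_return` and the default discount factor `gamma = 0.99` are illustrative assumptions, not part of any particular library.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float = 0.99) -> float:
    """Return r_0 + gamma * r_1 + gamma**2 * r_2 + ... for one reward sequence.

    `gamma` is the discount factor (an assumed default of 0.99 here):
    values near 0 favor immediate rewards, values near 1 favor the long term.
    """
    if not 0.0 <= gamma <= 1.0:
        raise ValueError("gamma must lie in [0, 1]")
    total = 0.0
    # Walk backwards through time, using G_t = r_t + gamma * G_{t+1}.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


if __name__ == "__main__":
    # Three steps with a reward of 1 each, heavily discounted:
    # 1 + 0.5 * 1 + 0.25 * 1 = 1.75
    print(discounted_return([1.0, 1.0, 1.0], gamma=0.5))
```

An RL Agent maximizes the expectation of this quantity over the trajectories its behavior produces. The discount factor plays a role loosely similar to how a person weighs present Resources against future ones.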

The Goal, as a Human/Agent, is learning to maximize Life-Time-Resources/Long-Term-Rewards using the Mental-Digital-Physical/Markov-Decision-Process framework.

Reward is enough? TBD

Creating and executing ideas that cause physical changes is a key to attaining human-level intelligence. For Artificial Intelligence (AI) to go beyond human-level general intelligence, the reward signal should point towards creativity. AI Agents should collectively figure out what the Goal is (if there is one) and what the Reward is (if it is enough).